Howdy,

Kubernetes is a dream orchestration tool for any developer. However, it can be a nightmare for security folks (pulling images straight from the internet makes them cringe). After all, K8s manages containers running apps and databases connected to an overlay network. Containers may hold sensitive information, and they can be exploited and used to attack internal or external assets.

Nuage helps you microsegment containers and even insert security instances in the traffic path to analyze it. It also gives you overall management of the network and its settings: forwarding and control rules, plus the public/WAN segment used to publish your apps and control bandwidth utilization.

The best part: all those settings can be managed through automated policies, so you do not lose any agility.

Scalability is also a known concern. There are customers managing tens of thousands of instances with a single VSD instance as the global console. The use of a lightweight protocol such as OpenFlow helps explain it (most “SDN” solutions use XMPP to program vSwitches or vRouters).

Containers scale in seconds. Do your security and network do the same?

Below you will find a step-by-step guide to installing your Kubernetes cluster with Nuage.

Nuage Core solution

Nuage VSD and VSC have to be installed in advance. In my case, VSD is serving at 10.10.10.5:8443 and VSC is installed at 10.10.10.6.

I also have a DNS/NTP server for my lab. Even the K8s nodes have their CNAMEs and reverse records. I am using my DNS server as the web server that publishes the rpm files.

Prepare your installation

I’ve done a fresh install of my two servers (check my previous post)

Then we need to publish the Nuage rpm files on any HTTP server, and then prepare our ansible/git server (it will be the same machine as the k8s master in this case).

Don’t forget that both servers have to be accessible through ssh with no password at all (you will have to add the ansible node’s public key to the authorized_keys file on both servers).
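A minimal sketch of that key distribution, assuming the root user and the default RSA key path. `ssh-keygen` and `ssh-copy-id` are the standard OpenSSH tools. The helper name `print_key_copy_cmds` is hypothetical, and it only prints the commands so you can review them before running anything.

```shell
#!/bin/sh
# Hypothetical helper: print (not run) the commands that push the ansible
# node's public key to every host passed as an argument.
print_key_copy_cmds() {
    key="$HOME/.ssh/id_rsa.pub"   # assumption: default RSA key location
    for host in "$@"; do
        printf 'ssh-copy-id -i %s root@%s\n' "$key" "$host"
    done
}

# Usage on the ansible node:
#   ssh-keygen -t rsa -N "" -f "$HOME/.ssh/id_rsa"   # only if no key exists yet
#   print_key_copy_cmds k8scluster.nuage.lab k8snode01.nuage.lab | sh
```

Afterwards, `ssh root@k8snode01.nuage.lab true` should return without asking for a password.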

    rpm -iUvh http://dl.fedoraproject.org/pub/epel/7/x86_64/e/epel-release-7-8.noarch.rpm
    yum -y update
    yum -y install ansible
    yum -y install git
    yum -y install python-netaddr

Synchronize with your NTP server and set the local time zone to your current one.

Normalize your hostname vars

    [root@k8scluster ansible]# HOSTNAME=k8scluster.nuage.lab
    [root@k8scluster ansible]# cat /etc/hosts
    127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4
    10.10.10.17 k8scluster.nuage.lab k8scluster
    [root@k8scluster ansible]# cat /etc/hostname
    k8scluster.nuage.lab
    [root@k8scluster ansible]# hostname k8scluster.nuage.lab
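If you want to double-check those hostname settings before running the playbook, something like the following could work. It is only a sketch: `hosts_has_entry` is a hypothetical helper name, and it simply looks for a line in a hosts file that maps a given IP to a given name.

```shell
#!/bin/sh
# Hypothetical helper: succeed if hosts file $1 maps IP $2 to name $3.
hosts_has_entry() {
    awk -v ip="$2" -v name="$3" '
        # Only look at lines whose first field is the requested IP.
        $1 == ip { for (i = 2; i <= NF; i++) if ($i == name) found = 1 }
        END { exit !found }
    ' "$1"
}

# Usage on the master from this post:
#   hosts_has_entry /etc/hosts 10.10.10.17 k8scluster.nuage.lab \
#       && [ "$(cat /etc/hostname)" = "k8scluster.nuage.lab" ] \
#       && echo "hostname settings look consistent"
```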

Clone Nuage files:

    git clone https://github.com/vishpat/contrib
    cd contrib
    git checkout origin/nuage -b nuage

You will get something like this:

    [root@k8snode01 ~]# git clone https://github.com/vishpat/contrib
    Cloning into 'contrib'...
    remote: Counting objects: 23650, done.
    remote: Compressing objects: 100% (3/3), done.
    remote: Total 23650 (delta 0), reused 0 (delta 0), pack-reused 23647
    Receiving objects: 100% (23650/23650), 27.79 MiB | 12.87 MiB/s, done.
    Resolving deltas: 100% (10400/10400), done.
    Checking out files: 81% (10289/12702)
    Checking out files: 100% (12702/12702), done.
    [root@k8snode01 ~]# cd contrib
    [root@k8snode01 contrib]# git checkout origin/nuage -b nuage
    Branch nuage set up to track remote branch nuage from origin.
    Switched to a new branch 'nuage'

Installing K8s with Nuage in a few steps

Create ansible.cfg inside ~/contrib/ansible

    # this ~/contrib/ansible/ansible.cfg file
    [defaults]
    # Set the log_path
    log_path = /var/log/ansible.log

    [ssh_connection]
    pipelining = True

Create your inventory nodes file ~/contrib/ansible/nodes

    # Inventory file ~/contrib/ansible/nodes
    # Create an k8s group that contains the masters and nodes groups
    [k8s:children]
    masters
    nodes

    [k8s:vars]
    ansible_ssh_user=root
    vsd_api_url=https://10.10.10.5:8443
    vsp_version=v4_0
    enterprise=K8s_Lab
    domain=Kubernetes03
    vsc_active_ip=10.10.10.6
    uplink_interface=eth0
    nuage_monitor_rpm=http://10.10.10.2/Kubernetes/RPMS/x86_64/nuagekubemon-4.0-3.20.el7.centos.x86_64.rpm
    vrs_rpm=http://10.10.10.2/Kubernetes/RPMS/x86_64/nuage-openvswitch-4.0.3-25.el7.x86_64.rpm
    plugin_rpm=http://10.10.10.2/Kubernetes/RPMS/x86_64/nuage-k8s-plugin-4.0-3.20.el7.centos.x86_64.rpm

    # host group for masters
    [masters]
    k8scluster.nuage.lab

    [etcd]
    k8scluster.nuage.lab

    # host group for nodes, includes region info
    [nodes]
    k8scluster.nuage.lab
    k8snode01.nuage.lab
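Since the playbook downloads the three Nuage rpm files from the URLs in this inventory, it may be worth confirming they are reachable first. The sketch below assumes the inventory keeps one variable per line. `inventory_rpm_urls` is a hypothetical helper name, and the `curl` check in the usage comment is just one way to probe the web server.

```shell
#!/bin/sh
# Hypothetical helper: print every URL assigned to a *_rpm variable in the
# inventory file given as $1, one URL per line.
inventory_rpm_urls() {
    sed -n 's/^[A-Za-z_]*_rpm=\(http[^ ]*\).*$/\1/p' "$1"
}

# Usage: send a HEAD request to each URL and report the ones that fail.
#   inventory_rpm_urls ~/contrib/ansible/nodes | while read -r url; do
#       curl -sfI "$url" >/dev/null || echo "unreachable: $url"
#   done
```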

Execute playbook

    cd contrib/ansible
    ansible-playbook -vvvv -i nodes cluster.yml

This is the final result I’ve got:

    PLAY RECAP *********************************************************************
    k8scluster.nuage.lab       : ok=303  changed=51   unreachable=0    failed=0
    k8snode01.nuage.lab        : ok=102  changed=36   unreachable=0    failed=0
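With `-vvvv` the recap is easy to lose in the scrollback, so a small filter can help flag hosts that did not finish cleanly. This is a sketch, not part of the Nuage playbooks: `recap_problems` is a hypothetical name, and it assumes the recap lines look like the ones above (host name first, then `key=value` counters).

```shell
#!/bin/sh
# Hypothetical helper: read ansible-playbook output on stdin, print each host
# whose recap line has unreachable > 0 or failed > 0, and exit 1 if any did.
recap_problems() {
    awk '
        /unreachable=[0-9]+/ && /failed=[0-9]+/ {
            bad = 0
            for (i = 1; i <= NF; i++) {
                if ($i ~ /^(unreachable|failed)=/) {
                    split($i, kv, "=")
                    if (kv[2] + 0 > 0) bad = 1
                }
            }
            if (bad) { print $1; problems = 1 }
        }
        END { exit (problems ? 1 : 0) }
    '
}

# Usage (run.log is an arbitrary file name):
#   ansible-playbook -vvvv -i nodes cluster.yml | tee run.log | recap_problems \
#       || echo "some hosts failed, check run.log"
```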

Check K8s cluster settings:

    [root@k8scluster ~]# kubectl cluster-info
    Kubernetes master is running at http://localhost:8080
    Elasticsearch is running at http://localhost:8080/api/v1/proxy/namespaces/kube-system/services/elasticsearch-logging
    Heapster is running at http://localhost:8080/api/v1/proxy/namespaces/kube-system/services/heapster
    Kibana is running at http://localhost:8080/api/v1/proxy/namespaces/kube-system/services/kibana-logging
    KubeDNS is running at http://localhost:8080/api/v1/proxy/namespaces/kube-system/services/kube-dns
    Grafana is running at http://localhost:8080/api/v1/proxy/namespaces/kube-system/services/monitoring-grafana
    InfluxDB is running at http://localhost:8080/api/v1/proxy/namespaces/kube-system/services/monitoring-influxdb

Start your Nuage Kubernetes Monitor service:

service nuagekubemon start

================== Begin update Sept 22, 2016 ================

If you want to use services through kube-proxy (like service type=LoadBalancer), we have to change the /etc/kubernetes/proxy settings on all nodes as follows. The reason? Nuage, instead of iptables, is now managing the security settings.

    #/etc/kubernetes/proxy
    KUBE_PROXY_ARGS="--kubeconfig=/etc/kubernetes/proxy.kubeconfig --proxy-mode=userspace"

================== End update Sept 22, 2016 ==================
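The kube-proxy change from the update above has to be repeated on every node, so it can be handy to script it. The sketch below assumes `KUBE_PROXY_ARGS` sits on a single double-quoted line, as in the example. `set_userspace_proxy` is a hypothetical helper name. The `systemctl restart kube-proxy` in the usage comment is my assumption about the service name, not something taken from the Nuage docs.

```shell
#!/bin/sh
# Hypothetical helper: make sure KUBE_PROXY_ARGS in file $1 carries
# --proxy-mode=userspace, replacing any other proxy mode already set.
set_userspace_proxy() {
    file="$1"
    if grep -q -e '--proxy-mode=' "$file"; then
        # A proxy mode is already present: overwrite its value.
        sed 's/--proxy-mode=[a-z]*/--proxy-mode=userspace/' "$file" > "$file.tmp"
    else
        # No proxy mode yet: append the flag inside the closing quote.
        sed 's/^\(KUBE_PROXY_ARGS=".*\)"$/\1 --proxy-mode=userspace"/' "$file" > "$file.tmp"
    fi
    # Write back in place so the original owner and permissions are kept.
    cat "$file.tmp" > "$file"
    rm -f "$file.tmp"
}

# Usage on each node (service name is an assumption):
#   set_userspace_proxy /etc/kubernetes/proxy && systemctl restart kube-proxy
```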

Launching an App

Finally, let’s launch something:

    [root@k8scluster ansible]# kubectl run my-nginx --image=nginx --replicas=2 --port=80
    deployment "my-nginx" created
    [root@k8scluster ansible]# kubectl get po
    NAME                        READY     STATUS              RESTARTS   AGE
    my-nginx-3800858182-r0om7   0/1       ContainerCreating   0          2m
    my-nginx-3800858182-xtffj   0/1       ContainerCreating   0          2m
    [root@k8scluster ansible]# kubectl get po
    NAME                        READY     STATUS    RESTARTS   AGE
    my-nginx-3800858182-r0om7   1/1       Running   0          6m
    my-nginx-3800858182-xtffj   1/1       Running   0          6m
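Instead of re-running `kubectl get po` by hand until the pods come up, you could poll for it. A rough sketch, assuming the default column layout shown above (STATUS in the third column); `pending_pods` is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: read `kubectl get po` output on stdin and print how
# many pods are not in the Running state (the header line is skipped).
pending_pods() {
    awk 'NR > 1 && $3 != "Running" { n++ } END { print n + 0 }'
}

# Usage: check every 5 seconds until all pods report Running.
#   while [ "$(kubectl get po | pending_pods)" -gt 0 ]; do sleep 5; done
```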

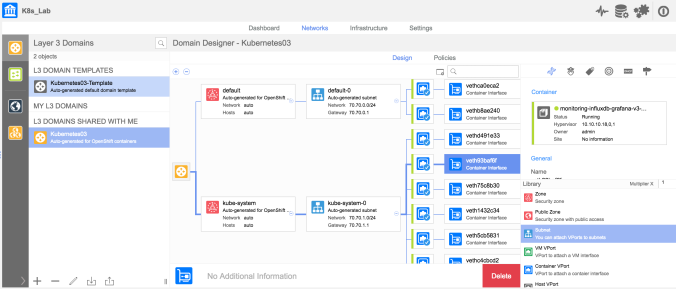

This is what we have in our VSD GUI: you will see the containers from the K8s cluster and nginx.

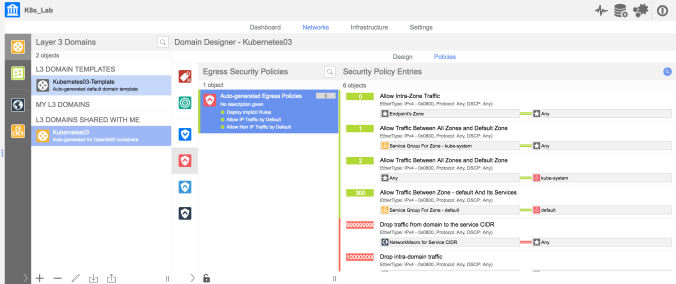

Now you’ll see security policies in a more human-friendly interface.

See ya!
